Which would you choose if you were diagnosed with early-stage breast cancer? You could receive a standard chemo regimen, followed three months later by an MRI scan to determine the treatment’s success. Or, instead, a genomic analysis of your biopsied breast tissue could help determine a drug regimen tailored to your own genetic makeup.

An MRI scan would then assess your progress just three weeks later, so that, if need be, your oncologist could quickly switch to a different chemo protocol to attack the cancer.

The second approach adds several layers of information: your unique genetic profile, the early follow-up MRI, an ongoing assessment of your treatment’s effectiveness, and the chance to respond quickly to new information.

Now envision a truly global extension of this multilayered tactic. Beyond improving treatment protocols, this approach also offers a path to further discovery.

A scientist studying how a misfolded protein triggers a degenerative disease might see another lab’s recent results online, for example, and learn that the same protein causes a different disorder. Such a link suggests a common molecular mechanism for both conditions – a precious clue that could yield cures.

Or picture experts in disparate fields using vastly different routes to discovery – molecular analysis, genomics, epidemiology – but each studying resistance to viral attack. With readier access to one another’s results and to novel analytic tools that tap reservoirs of related findings, they can transcend their specialties and improve their chances of seeing the pieces of the puzzle come together.

Such researchers are immersed in a kind of information commons, awash in a virtual library of interconnected laboratory notebooks and volumes of new analytic tools and data. With an increased ability to harvest information automatically and far more powerfully, they can more easily find the connections among discoveries that would otherwise go unrecognized.

Think of it as highly sophisticated scientific crowdsourcing. The shared information and insights create a rich “knowledge network.”

“The knowledge network is the ‘integrating center’ for precision medicine,” says Keith Yamamoto, PhD, vice chancellor for research, a professor of cellular and molecular pharmacology, and a leader of the precision medicine effort under way at UC San Francisco. “For the clinician-researcher, each added level of insight – say, finding the link between a specific genetic signature and an aggressive type of cancer – can lead to new ways to diagnose and treat the disease.”

The oncologist treating early-stage breast cancer, for example, might learn of a new online tool that refines the analysis of MRI scans. Or a colleague who sees her preliminary results might suggest a potent new strategy for screening for the best drug. Either way, the search for better treatments just got a little smarter.

“And in fundamental research,” says Yamamoto, “the network will be a ‘discovery generator,’ revealing new correlations testable in the lab or new clues to mechanisms that drive disease. The pace of research is extraordinary at many levels, but the real payoff lies where insights intersect.”

Making Connections Visible

“Ten years ago, the accumulation of new data was a warehousing problem. Now it’s a networking problem,” says Joe Hesse, a computational expert and technology strategist at UCSF’s Memory and Aging Center.

“Almost all areas of biomedical research are inundated with new information, but what we don’t have are good ways to link up the data to see the connections,” points out Kate Rankin, PhD, a postdoctoral alumna who is now an associate professor in the Memory and Aging Center and one of the prime movers in advancing the idea and reality of a knowledge network.

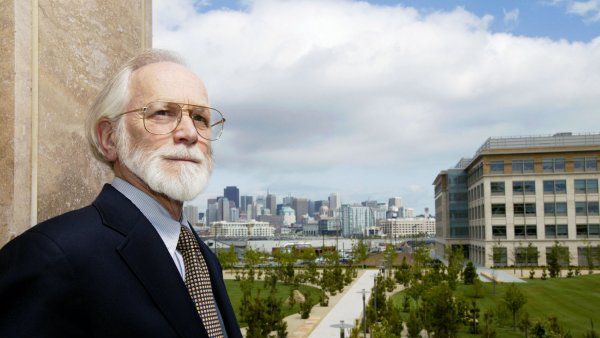

Neuroscientist Kate Rankin and technology expert Joe Hesse are collaborating to advance the knowledge network. Photo: Steve Babuljak

Rankin and a cadre of colleagues at UCSF and a few other institutions envision analytic pipelines that connect different types of data, yielding unsuspected correlations between, say, the progression of diseases and the pace of cell-cell interactions.

The new online analytic tools will be a crucial component of the knowledge network. In Rankin’s field of clinical neuroscience, scientists commonly use a visualization strategy that allows users to stack a number of MRI brain scans on top of each other and compare the same part of the brain in different patients.

She and colleagues have developed pattern-matching algorithms to identify specific diseases based on an individual’s pattern of neurologic damage. One of her goals is to link these two tools to create easy-access pipelines for both researchers and clinicians to use.

Another promising pipeline being developed mines publicly available libraries of gene functions to identify sets of genes statistically associated with specific metabolic functions. It has already been used to streamline identification of genes that are particularly active in inflammation – a condition now under increasing focus as a driver of a range of diseases, including atherosclerosis, diabetes, and cancer. Essentially, the analytic tool winnows down the candidates to an “inflammation gene set.” Researchers or clinicians can upload genomic data from a patient to learn if the telltale inflammation genes are active. If so, the patient might need treatment for inflammation even if the condition is not yet obvious.
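The statistical idea behind such a pipeline can be illustrated with a standard overlap test. The sketch below is a minimal illustration, not the UCSF tool itself. It assumes a patient’s genomic data have already been reduced to a set of “active” genes, and it uses a one-sided hypergeometric test, a common choice for gene-set enrichment, to ask whether members of a gene set turn up among those active genes more often than chance would predict. The function name, gene labels, and gene counts are hypothetical.

```python
from math import comb


def enrichment_p_value(active_genes: set[str], gene_set: set[str],
                       universe: set[str]) -> float:
    """One-sided hypergeometric p-value for gene-set enrichment.

    Returns the probability of seeing at least as many gene-set members
    among the active genes as were observed, if the active genes had been
    drawn at random from the universe of measured genes.
    """
    active = active_genes & universe      # ignore genes that were not measured
    members = gene_set & universe
    big_n, big_k, n = len(universe), len(members), len(active)
    k = len(active & members)             # observed overlap
    # Sum the hypergeometric probabilities for overlaps of k or more.
    tail = sum(comb(big_k, i) * comb(big_n - big_k, n - i)
               for i in range(k, min(big_k, n) + 1))
    return tail / comb(big_n, n)


# Hypothetical example: 20 measured genes and a 4-gene "inflammation" set.
universe = {f"G{i}" for i in range(20)}
inflammation_set = {"G0", "G1", "G2", "G3"}   # illustrative labels only
patient_active = {"G0", "G1", "G2", "G3"}     # all four set genes are active

p = enrichment_p_value(patient_active, inflammation_set, universe)
print(f"p = {p:.6f}")  # a small p suggests the set is unusually active
```

A working pipeline would also need to decide what counts as “active” from the patient’s measurements and to correct for testing many gene sets at once; both steps are left out here to keep the core test visible.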

UCSF’s Laura van ’t Veer, PhD, who focuses on cancer diagnostics, is a leader in clinical trials to develop drug regimens tailored to discrete genetic profiles in different tumors. She fully endorses the multilevel approach to help develop new cancer treatments. She also wonders if other health complications – other levels – might affect the course of a disease.

“Yesterday I talked with a diabetes researcher. The knowledge network should help collaborators in different fields ask, ‘Does diabetes influence how normal cells get derailed in cancer?’” says van ’t Veer, who holds the Angela and Shu Kai Chan Endowed Chair in Cancer Research.

She also questions inflammation’s role in so many diseases. “What are the connections? What can we learn from the fact that inflammation is expressed in what we think of as very different disorders?

“The knowledge network keeps evolving,” she says. As novel tools and richer, ongoing exchanges lead to fresh insights, she explains, “we can apply new knowledge more quickly and more easily to develop the next type of treatment.”

Expanding the Knowledge Network

A fully functioning knowledge network will not be easy to achieve. It will succeed only with wide participation, new analytic tools, and powerful computational capacity.

Hesse cites pioneering work at the Lawrence Berkeley National Lab by Adam Arkin, PhD, who is developing a software platform called KBase that offers a template for developing a knowledge network in any field.


Arkin proposes assigning “values” to similar kinds of data from different sources, ranking the reliability of the findings based, for example, on the size of the sample, the metrics used to assess it, and the statistical power of the reported results.

“With this type of information available, one can decide the likely importance of an outlier result when compared with several others,” Hesse explains. “If the value is low because, say, the sample is small, the finding may be skewing interpretations. But if its value ranking is high, the result may warrant serious follow-up.”
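One familiar way to turn value rankings like these into numbers is inverse-variance weighting, a standard technique from meta-analysis in which each result counts in proportion to its precision, and precision grows with sample size. The sketch below uses that technique as a stand-in. It is not a description of how KBase or Arkin’s proposal actually scores findings, and the `Finding` record, lab labels, and numbers are hypothetical. It shows how a single small-sample outlier barely moves a pooled estimate.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    source: str        # hypothetical label for the lab reporting the result
    effect: float      # reported effect size
    std_error: float   # standard error; shrinks as the sample grows


def weight(f: Finding) -> float:
    """Inverse-variance weight: more precise findings count for more."""
    return 1.0 / f.std_error ** 2


def pooled_effect(findings: list[Finding]) -> float:
    """Weighted average of the reported effects."""
    total = sum(weight(f) for f in findings)
    return sum(weight(f) * f.effect for f in findings) / total


findings = [
    Finding("lab A", 0.20, 0.05),
    Finding("lab B", 0.20, 0.05),
    Finding("lab C", 0.20, 0.05),
    Finding("small study", 1.50, 0.50),   # outlier from a small sample
]

total = sum(weight(f) for f in findings)
for f in findings:
    print(f"{f.source}: {weight(f) / total:.1%} of total weight")
print(f"pooled effect: {pooled_effect(findings):.3f}")
```

Here the small study reports an effect more than seven times larger than the others, yet it carries well under 1 percent of the total weight, so the pooled estimate stays close to 0.20. A result that is both surprising and precise would pull hard on the estimate instead, which is the case where follow-up is most clearly warranted.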

Both the brain scan analytic strategy and the genetic profiler in Rankin’s neuroscience field are being built using the promising KBase platform as a template.

Providing ways to increase research networking would seem to be a natural fit for scientists. “We share our new findings at meetings and in journal articles that often get published many months after the research is complete,” Rankin says. “A true knowledge network would keep up with the pace of discovery.”

At the same time, not all researchers will want to share their data. Scientists can be very competitive, and some would certainly resist offering early access to their research. Respecting and protecting researchers’ privacy within the network poses a huge challenge.

Still, given the pace of discovery today, those who don’t share might risk being left out.

“Clinical researchers often find they don’t have large enough samples to carry out a new investigation of interest to all of them,” says Rankin. “Now it’s becoming more common for them to decide to pool their data: ‘I’ve got 34 patients, you have 47; let’s collaborate.’”

Hesse also sees the knowledge network as a natural extension of today’s online culture. “Sharing our information, experiences, and pictures online with our various personal networks has become second nature, so we’re primed to share research this way,” he says.

“We need to develop a rich online communications network that can at the same time protect privacy when it’s needed. But we will. It’s critical – and it’s inevitable.”